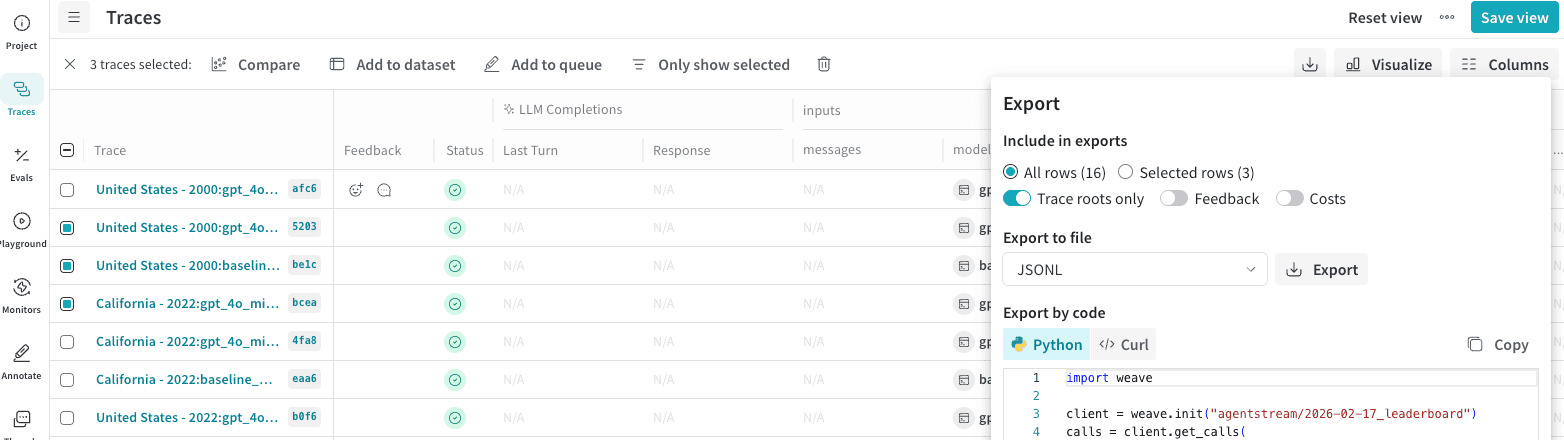

In the Weave UI, you can export your data in multiple formats. The UI also shows the Python and cURL code that you can use to export the rows programmatically. To export Calls:


- Navigate to wandb.ai and select your project.

- In the Weave project sidebar, click Traces.

- Select the Calls that you want to export by checking the box in each row.

- In the Traces table toolbar, click the export/download button.

- In the Export modal, choose Selected rows or All rows. Click Export.
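The UI shows the exact export code for your selection. As a rough sketch of what a programmatic export can look like in Python, the following turns Call objects into CSV text. The helper name calls_to_csv, the chosen attributes (id, op_name, trace_id), and the project name in the comment are assumptions for illustration, not the code the UI produces.

```python
import csv
import io
from types import SimpleNamespace

# Attribute names are assumptions about the Call objects returned by
# client.get_calls; adjust them to the fields you actually need.
FIELDS = ("id", "op_name", "trace_id")


def calls_to_csv(calls, fields=FIELDS):
    """Serialize an iterable of call-like objects to CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(fields)
    for call in calls:
        # Missing attributes become empty cells instead of raising.
        writer.writerow([getattr(call, name, "") for name in fields])
    return buffer.getvalue()


# With a real project (placeholder name), the calls would come from Weave:
#   import weave
#   client = weave.init("my-entity/my-project")
#   print(calls_to_csv(client.get_calls(limit=100)))

# Stand-in objects so the sketch runs without network access.
sample_calls = [
    SimpleNamespace(id="call-1", op_name="predict", trace_id="trace-a"),
    SimpleNamespace(id="call-2", op_name="score", trace_id="trace-a"),
]
csv_text = calls_to_csv(sample_calls)
```

Keeping the serialization separate from the fetch makes it easy to swap in a different set of columns or a different output format.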

Fetch calls programmatically

- Python

- TypeScript

- HTTP API

To fetch calls using the Python API, you can use the client.get_calls method.

Export call metrics

You can also use the Weave Service API’s POST /calls/stats endpoint to retrieve metrics about your Calls, such as latency and cost, without retrieving the Call data itself. The endpoint can aggregate these metrics by sum, average, minimum, maximum, and count. For example, you can retrieve:

- Total token usage

- Average latency

- Maximum tokens used

- Total cost

- Minimum input tokens

- Op name

- Trace ID

- Thread ID

- User ID

web_app over a two-day period:

sum, count, avg, min, max, and count.

The endpoint returns a JSON object. The following example response shows two days’ worth of metrics. Each day (bucket) is broken up into its own object in the usage_buckets and call_buckets arrays. Each array breaks down the metrics differently:

- usage_buckets: Groups Call metrics for each day by the model used.

- call_buckets: Groups Call metrics for each day regardless of the model used.
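To illustrate the difference between the two arrays, here is a minimal Python sketch that counts the buckets in each one. Only the top-level keys usage_buckets and call_buckets come from the description above; the helper name count_buckets and every field inside the sample buckets are hypothetical and do not reflect the real response schema.

```python
def count_buckets(response):
    """Return how many buckets each array in the response contains."""
    return {
        key: len(response.get(key, []))
        for key in ("usage_buckets", "call_buckets")
    }


# Hypothetical payload: only the two top-level keys come from the text;
# the fields inside each bucket are made up for illustration.
sample_response = {
    "usage_buckets": [
        {"day": "day-1", "model": "model-a"},
        {"day": "day-1", "model": "model-b"},
        {"day": "day-2", "model": "model-a"},
    ],
    "call_buckets": [{"day": "day-1"}, {"day": "day-2"}],
}
bucket_counts = count_buckets(sample_response)
```

In this made-up sample, usage_buckets holds more entries than call_buckets because a day with two models produces two usage buckets but only one call bucket.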